Table of Contents

Section I – Financial/Administrative Performance

IceCube NSF M&O Award Budget, Actual Cost and Forecast

IceCube M&O Common Fund Contributions

Section II – Maintenance and Operations Status and Performance

Detector Operations and Maintenance

Computing and Data Management Services

Data Release

Data Processing and Simulation Services

IceCube Software Coordination

Calibration

Program Management

IceCube M&O – PY3 (FY2018/2019) Milestones Status

Section III – Project Governance and Upcoming Events

Acronym List

Section I – Financial/Administrative Performance

The University of Wisconsin–Madison is maintaining three separate accounts with supporting charge numbers for collecting IceCube M&O funding and reporting related costs: 1) NSF M&O Core account, 2) U.S. Common Fund account, and 3) Non-U.S. Common Fund account.

A total of $7,000,000 was released to UW–Madison to cover the costs of maintenance and operations in PY3 (FY2018/FY2019): $1,064,700 was directed to the U.S. Common Fund account based on the number of U.S. Ph.D. authors in the last version of the institutional MoUs, and the remaining $5,935,300 was directed to the IceCube M&O Core account (Table 1). An additional $291,712 in FY2019 funding was awarded to support an IceCube M&O supplemental proposal to further develop talent for STEM research, increase diversity and inclusion in science and related fields, and connect research-based skills and technology to societal challenges.

| PY3: FY2018 / FY2019 | Funds Awarded to UW for Apr 1, 2018 – March 31, 2019 |
|---|---|
| IceCube M&O Core account | $5,935,300 |
| U.S. Common Fund account | $1,064,700 |
| TOTAL NSF Funds | $7,000,000 |

| Institution | Major Responsibilities | Funds |
|---|---|---|
| Lawrence Berkeley National Laboratory | | $91,212 |
| Pennsylvania State University | | $70,847 |
| University of Delaware, Bartol Institute | | $149,265 |
| University of Maryland at College Park | | $603,369 |
| University of Alabama at Tuscaloosa | | $24,592 |
| Michigan State University | Simulation software, simulation production | $28,807 |
| South Dakota School of Mines and Technology (added in July 2017) | Simulation production and reconstruction | $0.00 |
| Total | | $968,092 |

IceCube NSF M&O Award Budget, Actual Cost and Forecast

The current IceCube NSF M&O 5-year award was established in the middle of Federal Fiscal Year 2016, on April 1, 2016. The following table presents the financial status ten months into Year 3 of the award and shows the estimated balance at the end of PY3.

Total funds awarded to the University of Wisconsin (UW) for supporting IceCube M&O from the beginning of PY1 through the end of PY3 are $21,360K (including the supplemental funding of $67,999 in PY2 and $291,712 in PY3). Total actual cost as of January 31, 2019, is $19,427K, and open commitments against purchase orders and subaward agreements are $854K. The current balance as of January 31, 2019, is $1,079K. With a projection of $1,146K for the remaining expenses during the final two months of PY3, the estimated balance at the end of PY3 is -$67K, a deficit equal to 0.3% of the cumulative Years 1–3 budget (Table 3).

| (a) | (b) | (c) | (d) = a - b - c | (e) | (f) = d - e |
|---|---|---|---|---|---|
| YEARS 1-3 Budget, Apr.’16-Mar.’19 | Actual Cost To Date through Jan 31, 2019 | Open Commitments on Jan 31, 2019 | Current Balance on Jan 31, 2019 | Remaining Projected Expenses through Mar. 2019 | End of PY3 Forecast Balance on Mar. 31, 2019 |
| $21,360K | $19,427K | $854K | $1,079K | $1,146K | -$67K |
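The column relationships in the table above can be checked with a few lines of Python. This is only an illustrative sketch: the variable names are ours, the figures are copied from the table in thousands of dollars, and the last quantity expresses the forecast deficit as a share of the Years 1-3 budget in column (a).

```python
# Figures in thousands of dollars ($K), copied from the budget table above.
budget_years_1_3 = 21_360      # (a) Years 1-3 budget
actual_cost = 19_427           # (b) actual cost through Jan 31, 2019
open_commitments = 854         # (c) open commitments on Jan 31, 2019
remaining_projected = 1_146    # (e) projected expenses through Mar. 2019

# (d) = a - b - c
current_balance = budget_years_1_3 - actual_cost - open_commitments
# (f) = d - e
forecast_balance = current_balance - remaining_projected
# Size of the forecast deficit relative to the cumulative budget in column (a).
share_of_budget = abs(forecast_balance) / budget_years_1_3

print(f"Current balance (d): ${current_balance:,}K")
print(f"Forecast balance (f): {forecast_balance:,}K")
print(f"Deficit as share of Years 1-3 budget: {share_of_budget:.1%}")
```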

IceCube M&O Common Fund Contributions

The IceCube M&O Common Fund was established to enable collaborating institutions to contribute to the costs of maintaining the computing hardware and software required to manage experimental data prior to processing for analysis.

Each institution contributes to the Common Fund, based on the total number of the institution’s Ph.D. authors, at the established rate of $13,650 per Ph.D. author. The Collaboration updates the Ph.D. author count twice a year before each collaboration meeting in conjunction with the update to the IceCube Memorandum of Understanding for M&O.

The M&O activities identified as appropriate for support from the Common Fund are those core activities that are agreed to be of common necessity for reliable operation of the IceCube detector and computing infrastructure and are listed in the Maintenance & Operations Plan.

Table 4 summarizes the planned and actual Common Fund contributions for the period of April 1, 2018–March 31, 2019, based on v24.0 of the IceCube Institutional Memorandum of Understanding, from May 2018.

| | Ph.D. Authors | Planned Contribution | Actual Received |
|---|---|---|---|
| Total Common Funds | 138 | $1,883,700 | $1,575,368 |
| U.S. Contribution | 71 | $969,150 | $969,150 |
| Non-U.S. Contribution | 66 | $900,000 | $606,218* |
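The per-author rate makes the planned contribution a simple product. The sketch below is illustrative only: `planned_contribution` is a hypothetical helper name, and it is applied to the total and U.S. author counts reported in the table.

```python
RATE_PER_PHD_AUTHOR = 13_650  # dollars per Ph.D. author, as stated in the text


def planned_contribution(phd_authors: int, rate: int = RATE_PER_PHD_AUTHOR) -> int:
    """Planned Common Fund contribution in dollars (hypothetical helper)."""
    return phd_authors * rate


total_planned = planned_contribution(138)  # all Ph.D. authors
us_planned = planned_contribution(71)      # U.S. Ph.D. authors

print(f"Total planned: ${total_planned:,}")
print(f"U.S. planned:  ${us_planned:,}")
```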

Detector Operations and Maintenance

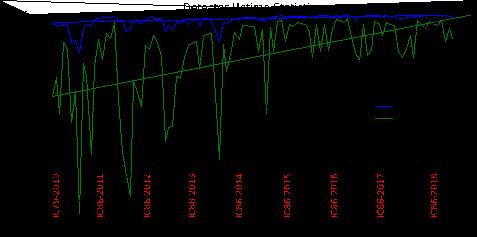

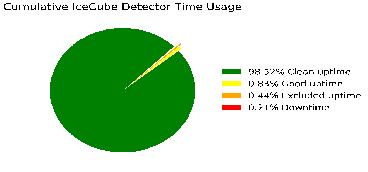

Detector Performance — During the period from April 1, 2018 to January 31, 2019, the detector uptime, defined as the fraction of the total time that some portion of IceCube was taking data, was 99.79%, exceeding our target of 99% and close to the maximum possible, given our current data acquisition system. The clean uptime for this period, indicating full-detector analysis-ready data, was 98.52%, exceeding our target of 95%. Historical total and clean uptimes of the detector are shown in Figure 1.

Figure 2 shows a breakdown of the detector time usage over the reporting period. The partial-detector good uptime was 0.83% of the total and includes analysis-ready data with fewer than all 86 strings. Excluded uptime includes maintenance, commissioning, and verification data and required 0.44% of detector time. The unexpected detector downtime was limited to 0.21%.
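As a consistency check on these figures, the four categories should account for all detector time, and the total uptime should equal everything except the unexpected downtime. The following is a minimal sketch using the percentages quoted above; the dictionary keys are our own labels.

```python
from decimal import Decimal

# Percentages of total detector time, April 1, 2018 to January 31, 2019 (from the text).
breakdown = {
    "clean uptime (full detector)": Decimal("98.52"),
    "partial-detector good uptime": Decimal("0.83"),
    "excluded uptime": Decimal("0.44"),
    "unexpected downtime": Decimal("0.21"),
}

total = sum(breakdown.values())
# Detector uptime: any time some portion of the detector was taking data.
detector_uptime = total - breakdown["unexpected downtime"]

print(f"Sum of all categories: {total}%")
print(f"Detector uptime: {detector_uptime}%")
```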

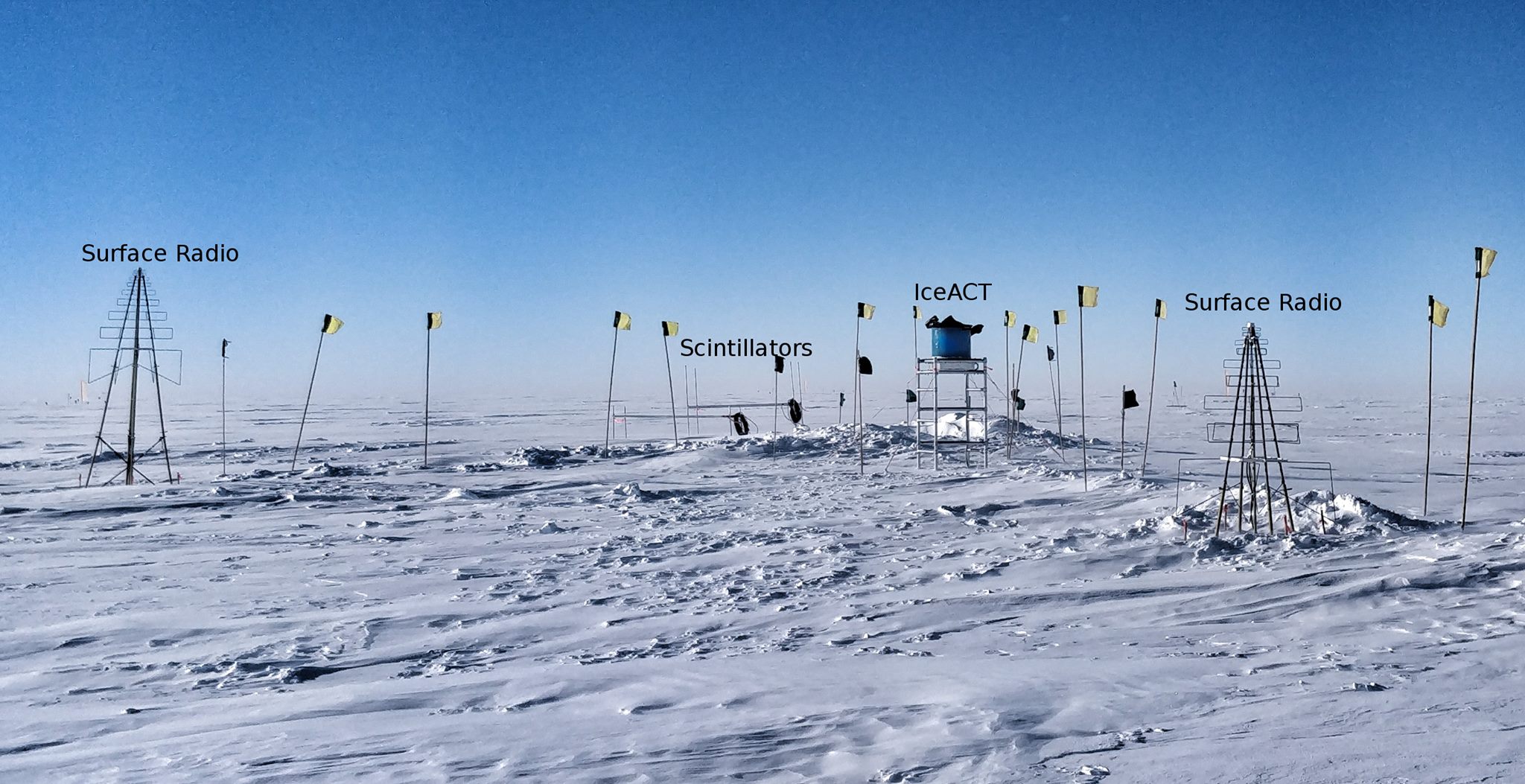

· Delivery of the pDAQ:Tyranena release in April 2018 and subsequent patch releases, which improve start-of-run behavior and fix a bug in the Simple Multiplicity Trigger affecting only the IceACT trigger.

· Delivery of the pDAQ:Urban_Harvest release in October 2018, which improves DAQ–IceCube Live interaction and DOM memory overflow reporting.

· Work towards the 2019 releases, which will address lingering component stalls at run starts and optimize the trigger and hit sorting components for multicore architectures.

Online Filtering — The online filtering system (“PnF”) performs real-time reconstruction and selection of events collected by the data acquisition system and sends them for transmission north via the data movement system. In addition to the standard filter changes to support the IC86–2018 physics run start, a new version of the realtime event processing system was released that streamlines event selection and supports additional types of neutrino alerts to a network of multi-messenger observatories.

Detector Monitoring and Experiment Control – Development of IceCube Live, the experiment control and monitoring system, is transitioning to a maintenance phase after several major feature releases in 2017. This reporting period has seen one major release with the following highlighted features, along with several minor releases through January 2019:

· Live v3.2 (May 2018): Highlights include an improved “billboard” status page (Fig. 4) and a faster run history page.

· Subsequent minor releases, up to v3.2.3 in January 2019, include automatic run configuration failover in the case of Acopian power supply failures; a new command-line tool to simplify I3Live administration; and internal data migration to a new database format.

· Development toward the 2019 releases, including preparation for an upcoming Python version upgrade.

Computing and Data Management Services

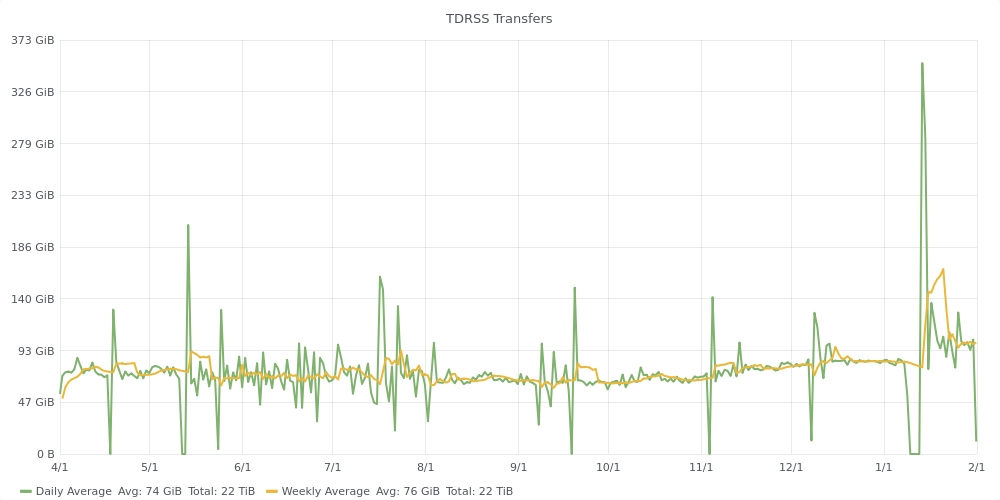

Data Transfer – Data transfer has performed nominally over the past ten months. Between April 2018 and January 2019, a total of 22.3 TiB of data was transferred from the South Pole to UW-Madison via TDRSS, at an average rate of 74.5 GiB/day. Figure 1 shows the daily and weekly average satellite transfer rates in GB/day through January 2019. The IC86 filtered physics data are responsible for 95% of the bandwidth usage.

Since September 2016, the JADE software has handled all the IceCube data flows: disk archive at the South Pole, satellite transfer to UW-Madison, and long-term archive to tape libraries at NERSC and DESY. JADE continues to operate smoothly and has been an effective tool for handling a variety of our routine data movement workflows. This has been confirmed over the last year by the experience of both the Winterovers and IT staff at UW-Madison.

Figure 1: TDRSS Data Transfer Rates, April 1, 2018–January 31, 2019. The daily transferred volumes are shown in green and, superimposed in yellow, the weekly average daily rates are also displayed.

Data Archive – The IceCube raw data is archived by writing two copies on independent hard disks. During the reporting period (April 2018 to January 2019), a total of 306 TiB of unique data was archived to disk, averaging 1 TiB/day.
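The average rates quoted for satellite transfer and disk archive follow from the reporting-period length. The sketch below assumes the period runs from April 1, 2018, through January 31, 2019, inclusive; because the 22.3 TiB total is rounded, the computed satellite rate agrees with the quoted 74.5 GiB/day only to within that rounding.

```python
from datetime import date

# Reporting period and volumes as stated in the text.
start, end = date(2018, 4, 1), date(2019, 1, 31)
days = (end - start).days + 1  # inclusive day count

satellite_tib = 22.3  # TiB sent north via TDRSS
archived_tib = 306    # TiB of unique raw data archived to disk

satellite_gib_per_day = satellite_tib * 1024 / days
archive_tib_per_day = archived_tib / days

print(f"Days in reporting period: {days}")
print(f"Satellite transfer: {satellite_gib_per_day:.1f} GiB/day")
print(f"Disk archive: {archive_tib_per_day:.2f} TiB/day")
```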

In May 2018, the set of archival disks containing the raw data taken by IceCube during 2017 was received at UW-Madison. These disks are processed using JADE which now indexes the metadata, bundles the data files into chunks suitable for storage in tape libraries, and replicates the data to the long-term archives at DESY and NERSC.

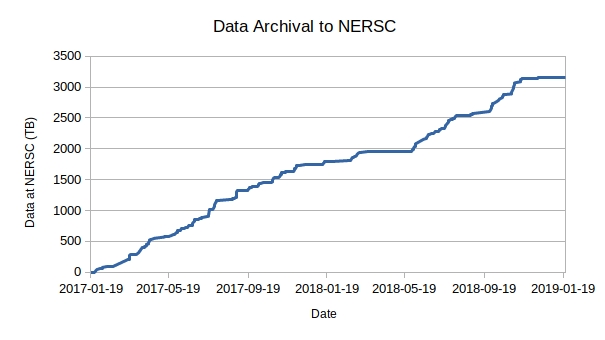

Beginning in September 2016, we have been regularly transferring archival data to NERSC. At this time, the total volume of data archived at NERSC is 3.15 PB. Figure 2 shows the rate at which data has been archived to NERSC since the start of this service. The plan is to keep this archive stream constantly active while working on further JADE functionality that will allow us to steadily increase the performance and automation of this long-term archive data flow.

Figure 2: Volume of IceCube data archived at the NERSC tape facility by the JADE Long Term Archive service as a function of time.

Computing Infrastructure at UW-Madison

The IceCube computing cluster at UW-Madison has continued to deliver reliable data processing services. Boosting the GPU computing capacity has been a high-priority activity since the Collaboration decided in 2012 to use GPUs for the photon propagation part of the simulation chain. Direct photon propagation provides the precision required and is very well suited to GPU hardware, running about 100 times faster than on CPUs. In addition, we have been decommissioning the data center facility at our 222 West Washington Avenue location and relocating the equipment to a commercial facility leased by UW-Madison.

The new facility is located approximately seven miles from our 222 West Washington Avenue location. It is a commercial facility offering redundant battery-backed generator power and biometric physical security. UW-Madison leases space, power, and cooling in this facility to support internal needs as well as project needs for the campus as a whole. As such, the university has extended the campus high-speed networks to the facility, which offers us easier integration with the rest of our campus systems without the higher costs typically associated with commercial rates for network transit. In particular, when the project is complete, we will have a full 100 Gb/s network path between this facility and our other facilities on campus.

To facilitate the data center relocation, we purchased and deployed a storage system with 9.6 PB raw capacity (7.2 PB usable) in the new facility. This provided sufficient usable capacity to consolidate our filesystem infrastructure and simplified the effort of migrating data to the new location. The new equipment was deployed and available in May 2018. Filesystem configuration, testing, and validation were completed at the end of June, and the data transfer began in July. By mid-August, the transfer of 6.0 PB had completed, and this system now serves as the primary storage for experimental, simulation, and analysis data.

The total amount of data stored on disk in the data warehouse at UW-Madison is 6.8 PB: 2.1 PB for experimental data, 4.4 PB for simulation and analysis and 283 TB for user data.

While storage comprises the largest consumer of physical space, there are a number of other systems which will also be migrated to the new facility. The work to do this will be ongoing and is expected to be complete in the first half of 2019. As data and services are migrated to the new facility, hardware that is still under warranty will be relocated. Older hardware that is still usable but out of support will be re-purposed for testing or moved into the compute cluster as needed. Any old hardware not needed for these purposes will be disposed of.

The other focus has been the continued expansion of the GPU cluster to provide the capacity to meet the Collaboration's direct photon propagation simulation needs. Still, the GPU needs have been estimated to be higher than the capacity of the GPU cluster at UW-Madison. Additional GPU resources at several IceCube sites, plus specific supercomputer allocations, help us approach the required capacity. IceCube has submitted a proposal to Phase I of Internet2’s Exploring Clouds for Acceleration of Science (E-CAS) project for $100,000 in credits with Amazon Web Services and Google Cloud Platform and 0.4 FTE. We are also exploring other proposals for dedicated GPU compute infrastructure.

Distributed Computing – In March 2016, a new procedure to formally gather computing pledges from collaborating institutions was started. This data is collected twice a year as part of the existing process by which every IceCube institution updates its MoU before the Collaboration week meeting. Institutions that pledge computing resources for IceCube are asked to provide the average number of CPUs and GPUs that they commit to provide for IceCube simulation production during the next period. Table 1 shows the computing pledges per institution as of September 2018:

| Site | Pledged CPUs | Pledged GPUs |
|---|---|---|
| Aachen | 27700* | 44* |
| Alabama | 6 | |
| Alberta | 1400 | 178 |
| Brussels | 1000 | 14 |
| Chiba | 196 | 6 |
| Delaware | 272 | |
| DESY-ZN | 1400 | 180 |
| Dortmund | 1300* | 40* |
| LBNL | 114 | |
| Mainz | 1000 | 300 |
| Marquette | 96 | 16 |
| MSU | 500 | 8 |
| NBI | 10 | |
| Penn State | 3200* | 101* |
| Queen’s | 55 | |
| Uppsala | 10 | |
| UMD | 350 | 112 |
| UTA | 50 | |
| UW-Madison | 7000 | 440 |
| Wuppertal | 300 | |
| TOTAL (exclusive) | 13688 | 1325 |
| TOTAL (all) | 45888 | 1510 |
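The two total rows can be related to each other with a short check. The footnote explaining the asterisk is not reproduced in this section, so the sketch below rests on an assumption: that the starred pledges (Aachen, Dortmund, Penn State) are the ones left out of "TOTAL (exclusive)". Under that assumption, the difference between the two totals should equal the sum of the starred entries.

```python
# Totals and starred rows copied from the pledge table above.
total_all = {"cpus": 45_888, "gpus": 1_510}
total_exclusive = {"cpus": 13_688, "gpus": 1_325}

# Assumption: starred pledges are the ones omitted from "TOTAL (exclusive)".
starred = {
    "Aachen": {"cpus": 27_700, "gpus": 44},
    "Dortmund": {"cpus": 1_300, "gpus": 40},
    "Penn State": {"cpus": 3_200, "gpus": 101},
}

starred_sum = {
    kind: sum(site[kind] for site in starred.values()) for kind in ("cpus", "gpus")
}
difference = {kind: total_all[kind] - total_exclusive[kind] for kind in total_all}

print("Sum of starred pledges:", starred_sum)
print("TOTAL (all) minus TOTAL (exclusive):", difference)
```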

Personnel

We have had significant personnel changes in 2018. Heath Skarlupka returned to the private sector in May 2018. Our web developer, Chad Sebranek, took a new position on campus in August 2018. David Schultz was hired into a new role as software lead developer and team lead. Finally, Gonzalo Merino took a new position at PIC and left IceCube in August 2018. A search was conducted for a new IceCube Computing Lead, and Benedikt Riedel assumed this role beginning in December 2018.

Data Release

IceCube is committed to the goal of releasing data to the scientific community. The following links contain data sets produced by AMANDA/IceCube researchers along with a basic description. Due to challenging demands on event reconstruction, background rejection and systematic effects, data is released after the main analyses are completed and results are published by the IceCube Collaboration.

Since summer 2016, thanks to UW-Madison subscribing to the EZID⁶ service, we have the capability of issuing persistent identifiers for datasets. These are Digital Object Identifiers (DOI) that follow the DataCite metadata standard⁷.

We are rolling out a process to ensure that all datasets made public by IceCube have a DOI and use the DataCite metadata standard capability to “link” each dataset to the associated publication, whenever this is applicable. The use of DataCite DOIs to identify IceCube public datasets increases their visibility by making them discoverable in the search.datacite.org portal (see https://search.datacite.org/works?resource-type-id=dataset&query=icecube).

1. IceCube data from 2008 to 2017 related to analysis of TXS 0506+056

2. IceCube catalog of alert events up through IceCube-170922A

· https://doi.org/10.21234/B4KS6S

3. Measurement of atmospheric neutrino oscillations with three years of data from the full sky

· https://doi.org/10.21234/B4105H

4. A combined maximum-likelihood analysis of the astrophysical neutrino flux:

· https://doi.org/10.21234/B4WC7T

6. Search for point sources with first year of IC86 data:

· https://doi.org/10.21234/B4159R

7. Search for sterile neutrinos with one year of IceCube data:

· http://icecube.wisc.edu/science/data/IC86-sterile-neutrino

8. The 79-string IceCube search for dark matter:

· http://icecube.wisc.edu/science/data/ic79-solar-wimp

9. Observation of Astrophysical Neutrinos in Four Years of IceCube Data:

· http://icecube.wisc.edu/science/data/HE-nu-2010-2014

10. Astrophysical muon neutrino flux in the northern sky with 2 years of IceCube data:

· https://icecube.wisc.edu/science/data/HE_NuMu_diffuse

11. IceCube-59: Search for point sources using muon events:

· https://icecube.wisc.edu/science/data/IC59-point-source

12. Search for contained neutrino events at energies greater than 1 TeV in 2 years of data:

· http://icecube.wisc.edu/science/data/HEnu_above1tev

13. IceCube Oscillations: 3 years muon neutrino disappearance data:

· http://icecube.wisc.edu/science/data/nu_osc

14. Search for contained neutrino events at energies above 30 TeV in 2 years of data:

· http://icecube.wisc.edu/science/data/HE-nu-2010-2012

15. IceCube String 40 Data:

· http://icecube.wisc.edu/science/data/ic40

16. IceCube String 22–Solar WIMP Data:

· http://icecube.wisc.edu/science/data/ic22-solar-wimp

17. AMANDA 7 Year Data:

· http://icecube.wisc.edu/science/data/amanda
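The DataCite portal link given earlier in this section is an ordinary query URL, so equivalent searches can be composed programmatically. The following is a minimal sketch using only the Python standard library; `dataset_search_url` is a hypothetical helper, and the parameter names are taken from the link in the text rather than from any portal documentation.

```python
from urllib.parse import urlencode

BASE_URL = "https://search.datacite.org/works"  # portal named in the text


def dataset_search_url(query: str) -> str:
    """Build a search URL restricted to dataset records (hypothetical helper)."""
    params = {"resource-type-id": "dataset", "query": query}
    return f"{BASE_URL}?{urlencode(params)}"


url = dataset_search_url("icecube")
print(url)
```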

Data Processing and Simulation Services

Data Reprocessing – At the end of 2012, the IceCube Collaboration agreed to store the compressed SuperDST as part of the long-term archive of IceCube data. The decision was to implement this change from the IC86-2011 run onwards. A server and a partition of the main tape library for input were dedicated to this data reprocessing task. Raw tapes are read to disk and the raw data files are processed into SuperDST, which is saved in the data warehouse. Now that all tapes from the Pole are in hand, we plan to complete the last 10% of this reprocessing task. The total number of files for seasons IC86-2011, IC86-2012, and IC86-2013 is 695,875, of which 67,812 remain to be processed. The file breakdown per year is as follows: IC86-2011, 221,687 processed out of 236,611; IC86-2012, 215,934 processed out of 222,952; IC86-2013, 190,442 processed out of 236,312. About 58,000 of the remaining files are on about 100 tapes; the rest are spread over about another 350. Tape dumping procedures are being integrated with the copy of raw data to NERSC.
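The per-season counts above are consistent with the quoted totals, as the following short tally shows. The numbers are copied from the text; the dictionary layout is our own.

```python
# (processed, total) raw-file counts per season, as quoted in the text.
seasons = {
    "IC86-2011": (221_687, 236_611),
    "IC86-2012": (215_934, 222_952),
    "IC86-2013": (190_442, 236_312),
}

total_files = sum(total for _, total in seasons.values())
processed = sum(done for done, _ in seasons.values())
remaining = total_files - processed

for name, (done, total) in seasons.items():
    print(f"{name}: {total - done:,} files remaining ({done / total:.1%} processed)")
print(f"Overall: {remaining:,} of {total_files:,} remaining ({remaining / total_files:.1%})")
```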

Offline Data Filtering – The data collection for the IC86-2018 season started on July 10, 2018. A new compilation of data processing scripts had been previously validated and benchmarked with the data taken during the 24-hour test run using the new configuration. The differences with respect to the IC86-2017 season scripts are minimal. Therefore, we estimate that the resources required for the offline production will be about 750,000 CPU hours on the IceCube cluster at the UW-Madison data center. 100 TB of storage is required to store both the Pole-filtered input data and the output data resulting from the offline production. We were able to reduce the required storage by using a more efficient compression algorithm in offline production. Since the start of the season, we have been using a new database structure at the Pole and in Madison for offline production. The data processing is proceeding smoothly, and no major issues have occurred. Level2 data are typically available one and a half weeks after data taking.

Additional data validations have been added to detect data value issues and corruption. Replication of all the data at the DESY-Zeuthen collaborating institution is being done in a timely manner. We are currently reviewing existing filters and reconstructions with the aim of streamlining offline processing at Level 2 and Level 3.

The reprocessing (pass2) started on June 1, 2017, and was completed in August 2018. Seven years of data (2010–2016) have been reprocessed: four years start at the sDST level (2011–2014) and three years at raw data. Starting at raw data was required for 2010 since sDST data was not available. Since the sDST data for 2015 and 2016 had already been SPE corrected, a reprocessing of the sDST data was required in order to apply the latest SPE fits, as is done for the other seasons.

The reprocessing of pass2 utilized 10,905,951 CPU hours and 520 TB of storage for sDST and Level2 data. An additional 2,000,000 CPU hours and 30 TB of storage were required to process the pass2 Level2 data to Level3.

Simulation – The production of IC86 Monte Carlo simulations of the IC86-2016 detector configuration began in October 2016. This configuration is representative of previous trigger and filter configurations from 2012–2018 and is consistent with Pass2 data reprocessing. As with previous productions, direct generation of Level 2 background simulation data is used to reduce storage space requirements. Additional changes allow us to omit details of stochastic energy losses in the final output and to save the state of the pseudo-random number generator instead. This allows users to regenerate the propagated Monte Carlo truth when needed. As part of the new production plan, intermediate photon-propagated data is now being stored on disk at DESY and reused for different detector configurations in order to reduce GPU requirements.

This transition to the 2016 configuration was done in conjunction with a switch to IceSim 5, which contains improvements in memory and GPU utilization in addition to previous improvements to correlated noise generation, Earth modeling, and lepton propagation. Current simulations are running on IceSim 6.1.0, with further improvements and bug fixes for various modules. Direct photon propagation is currently done on dedicated GPU hardware located at several IceCube Collaboration sites and through opportunistic grid computing, where the number of such resources continues to grow. We are currently working to implement additional optimizations in the simulation chain that take advantage of recent changes to the CORSIKA framework to perform importance sampling of parameter space and thereby better utilize computing resources.
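The idea of storing the pseudo-random number generator state in place of detailed stochastic output can be illustrated with Python's standard `random` module. This is a generic sketch of the technique, not IceSim code: `simulate_losses` is a hypothetical stand-in for a stochastic propagation step, and the seed is arbitrary.

```python
import random


def simulate_losses(rng: random.Random, n: int = 5) -> list:
    """Stand-in for a stochastic propagation step (hypothetical)."""
    return [rng.expovariate(1.0) for _ in range(n)]


rng = random.Random(2016)
saved_state = rng.getstate()      # compact state stored alongside the event
original = simulate_losses(rng)   # detailed output that would be discarded

# Later: restore the generator state and regenerate the same details on demand.
replay_rng = random.Random()
replay_rng.setstate(saved_state)
regenerated = simulate_losses(replay_rng)

print(regenerated == original)
```

The trade-off is storage against compute: the detailed values are not kept, but anyone holding the saved state and the same code can reproduce them exactly.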

The simulation production team organizes periodic workshops to explore better and more efficient ways of meeting the simulation needs of the analyzers. This includes both software improvements as well as new strategies and providing the tools to generate targeted simulations optimized for individual analyses instead of a one-size-fits-all approach.

The centralized production of Monte Carlo simulations has moved away from running separate instances of IceProd to a single central instance that relies on GlideIns running at satellite sites. Production has transitioned to a newly redesigned simulation scheduling system, IceProd2; the full transition was completed during the Spring 2017 Collaboration Meeting. Production throughput on IceProd2 has continually increased due to the incorporation of an increasing number of dedicated and opportunistic resources and a number of code optimizations. A new set of monitoring tools is currently being developed to keep track of efficiency and further optimizations.

Personnel – Kevin Meagher joined the simulation production team, replacing David Delventhal, who left in summer 2017. David Schultz has taken a new position with the computing department but will continue to provide support for IceProd2.

IceCube Software Coordination

The software systems spanning the IceCube Neutrino Observatory, from embedded data acquisition code to high-level scientific data processing, benefit from concerted efforts to manage their complexity. In addition to providing comprehensive guidance for the development and maintenance of the software, the IceCube Software Coordinator, Alex Olivas, works in conjunction with the IceCube Coordination Committee, the IceCube Maintenance and Operations Leads, the Analysis Coordinator, and the Working Group Leads to respond to current operational and analysis needs and to plan for anticipated evolution of the IceCube software systems. In the last year, software working group leads have been appointed to the following groups: core software, simulation, reconstruction, science support, and infrastructure. Continuing efforts are underway to ensure the software group is optimizing in-kind contributions to the development and maintenance of IceCube's physics software stack.

The IceCube Collaboration contributes software development labor via the biannual MoU updates. Software code sprints are organized seasonally (i.e., four times per year) with the software developers to prepare for software releases. Progress is tracked, among other means, through open software tickets tied to seasonal milestones. The IceCube software group has several major projects, including some labeled ‘on-going’ that are nearing completion:

· Simulation support for systematic features, critical for oscillation analyses (on-going);

· Delivered cable shadow feature to the calibration and oscillations groups for systematic studies;

· Reducing memory usage in simulation production (on-going), with one project about to be delivered and another project tasked to a new graduate student;

· The software coordinator ran a mini-bootcamp on C++;

· The software coordinator is currently running a bootcamp on multi-threading in C++, integral to preparation for the next generation of IceTray;

· A system to display histograms generated in mass production is being improved with a dataset comparison tool (on-going);

· A continuous integration and delivery (CI/CD) system is close to being delivered;

· A new simulation model is currently under development to achieve more efficient simulations by sampling important parameter space instead of brute force methods to simulate easily identified background cosmic-ray showers. This dynamic-stack CORSIKA framework provides a realistic path to achieve a rate of simulation production comparable to that of data taking.
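The benefit of sampling the important region of parameter space, compared with brute-force generation, can be seen in a toy example. The sketch below is not the dynamic-stack CORSIKA framework; it is a generic importance-sampling illustration with assumed distributions: the rare tail of an Exp(1) variable is estimated by drawing from a broader Exp(0.1) proposal and reweighting each accepted draw by the ratio of target to proposal density.

```python
import math
import random


def tail_probability_importance(threshold: float, n: int, seed: int = 1) -> float:
    """Estimate P(X > threshold) for X ~ Exp(1) by sampling from Exp(0.1)."""
    rng = random.Random(seed)
    proposal_rate = 0.1
    total = 0.0
    for _ in range(n):
        x = rng.expovariate(proposal_rate)
        if x > threshold:
            # Weight = target density / proposal density at x.
            weight = math.exp(-x) / (proposal_rate * math.exp(-proposal_rate * x))
            total += weight
    return total / n


exact = math.exp(-10)  # analytic tail probability for Exp(1)
estimate = tail_probability_importance(10.0, 100_000)

print(f"exact:    {exact:.3e}")
print(f"estimate: {estimate:.3e}")
```

With direct sampling from Exp(1), only a handful of the 100,000 draws would be expected to exceed the threshold, so the estimate would be dominated by statistical noise; the reweighted proposal places a large fraction of the draws in the region of interest.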

Calibration

Using single-flashing LED data collected in the last fiscal year, we continue to refine measurements of the ice properties. The pointing of each of the 12 LEDs has been reconstructed from this data and is known with better than 1° precision for most DOMs. Previously, the unknown pointing and intensity of each LED contributed substantially to the overall error in the fitting of ice model parameters. Therefore, all relevant ice model parameters, such as scattering and absorption coefficients, have now been recalibrated. Reduced errors on these measurements are also expected and are currently being evaluated. This data was also used to determine the relative position of the main cable, which shadows the photocathode in an azimuthally asymmetric way, for each DOM. The cable position is now included in a database and can be simulated with IceCube software.

Data was collected with the last movable camera system in late January 2018. The camera was pointed in the direction of neighboring strings in an attempt to visualize LEDs flashing in DOMs approximately 40 m away. After these runs completed, the motor on this last camera failed, with the field of view pointing into the waistband of the pressure sphere. Post-processing of the collected images has failed to localize the flashing LEDs, where the signal-to-noise ratio appears to be insufficient to detect the light. A simulation program for modeling the response of the camera system has been developed, and will be used to extract more quantitative measurements from archival images than was previously possible. The same simulation program is also being used to analyse images taken by cameras deployed in the SPICEcore borehole in December 2018, and will be used for the IceCube Upgrade camera image analysis as well.

Significant improvements have been made in the modeling of individual DOM response to single photo-electrons (SPE). A large sample of SPE waveforms has been collected for each DOM, and their charge reconstructed. Significant variations in the SPE charge distribution are observed DOM-to-DOM, which can have important consequences for the detection and reconstruction of MeV–GeV scale physics events. The individual SPE distribution for each DOM is now used to model the charge response in IceCube simulations, improving agreement with real data. A publication summarizing this analysis and results is in progress.

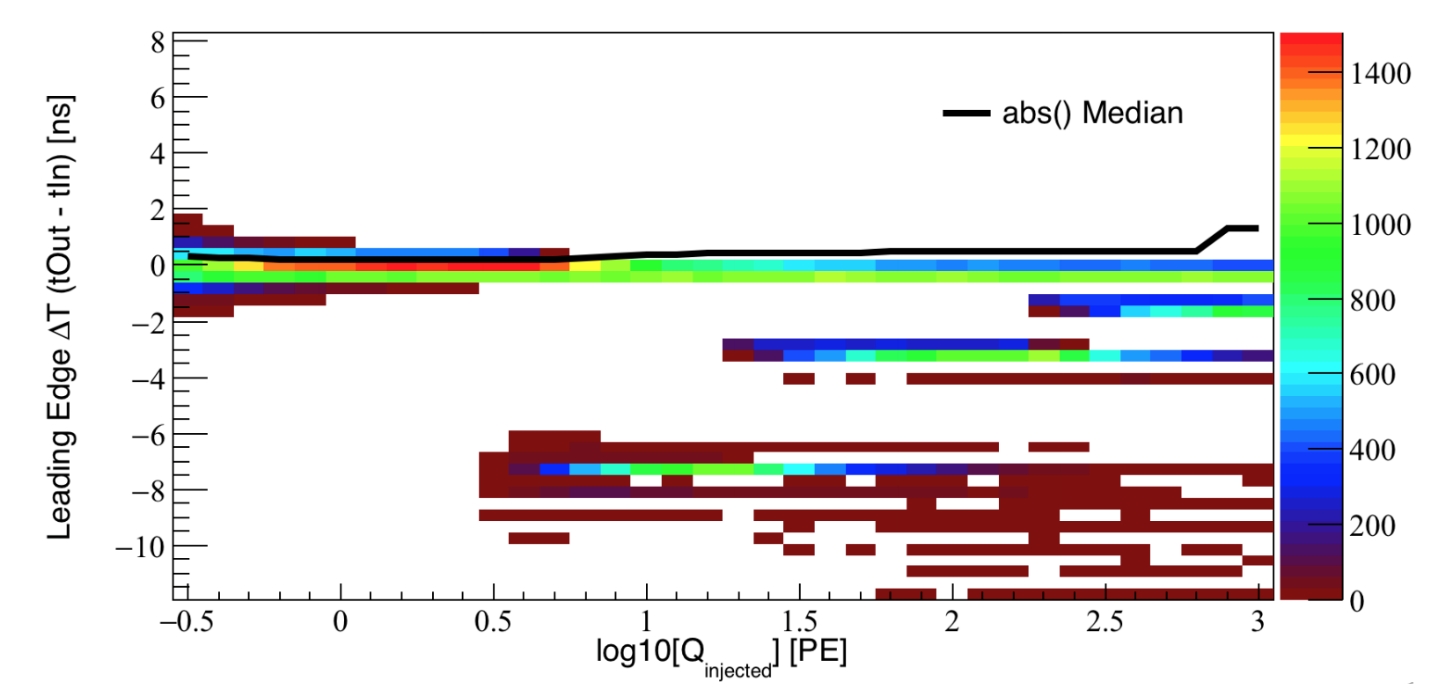

The IceCube waveform reconstruction uses an unfolding procedure, where the observed waveform is deconstructed into a series of scaled, single-photon pulse templates shifted in time. A feature of this unfolding procedure was found to inaccurately split waveforms, resulting in small amplitude pulses typically 7 ns earlier than the rest of the waveform as shown in Figure 3. A solution was developed that results in a slower, yet more accurate waveform reconstruction. The impact of this early-pulse splitting behaviour on high-level event reconstructions is currently being investigated by the collaboration to determine if a reprocessing of all data is warranted.

Figure 3: The reconstructed waveform leading edge time is compared to the simulated true leading edge time as a function of the injected total charge in the waveform. Early pulses are visible with ΔT << 0.

Program Management

Management & Administration – The primary management and administration effort is to ensure that tasks are properly defined and assigned, that the resources needed to perform each task are available when needed, and that resource efficiency is tracked to accomplish the task requirements and achieve IceCube’s scientific objectives. Efforts include:

· A complete re-baseline of the IceCube M&O Work Breakdown Structure to reflect the structure of the principal resource coordination entity, the IceCube Coordination Committee.

· The PY3 M&O Plan was submitted in January 2019.

· The detailed M&O Memorandum of Understanding (MoU) addressing responsibilities of each collaborating institution was revised for the collaboration meeting in Stockholm, Sweden, September 24-28, 2018.

IceCube M&O – PY3 (FY2018/2019) Milestones Status:

· Revise the Institutional Memorandum of Understanding (MOU v24.0) - Statement of Work and Ph.D. Authors head count for the spring collaboration meeting

· Report on Scientific Results at the Spring Collaboration Meeting

· Revise the Institutional Memorandum of Understanding (MOU v25.0) - Statement of Work and Ph.D. Authors head count for the fall collaboration meeting

· Report on Scientific Results at the Fall Collaboration Meeting

· Submit for NSF approval a mid-year report which describes progress made and work accomplished based on objectives and milestones in the approved annual M&O Plan

· Submit for NSF approval a revised IceCube Maintenance and Operations Plan (M&OP) and send the approved plan to non-U.S. IOFG members

· Revise the Institutional Memorandum of Understanding (MOU v26.0) - Statement of Work and Ph.D. Authors head count for the spring collaboration meeting

Engineering, Science & Technical Support – Ongoing support for the IceCube detector continues with the maintenance and operation of the South Pole Systems, the South Pole Test System, and the Cable Test System. The latter two systems are located at the University of Wisconsin–Madison and enable the development of new detector functionality as well as investigations into various operational issues, such as communication disruptions and electromagnetic interference. Technical support provides for coordination, communication, and assessment of impacts of activities carried out by external groups engaged in experiments or potential experiments at the South Pole. The IceCube detector performance continues to improve as we restore individual DOMs to the array at a faster rate than problem DOMs are removed during normal operations.

Education & Outreach (E&O)/Communications – The IceCube Collaboration's E&O and communications efforts have produced significant outcomes, organized around four main themes:

1) Reaching motivated high school students and teachers through Internships, IceCube Masterclasses and the University of Wisconsin-River Falls’ (UWRF) and University of Rochester’s (UR) Upward Bound programs, and creating cross-disciplinary opportunities through the LAB3 project

2) Providing intensive research experiences for teachers (in collaboration with PolarTREC) and for undergraduate students (NSF science grants and Research Experiences for Undergraduates (REU) funding) to increase STEM awareness

3) Engaging the public through various means, including web and print resources, graphic designs, a new IceCube comic, live talks, virtual reality gaming, and displays

4) Developing and implementing communication skills and diversity workshops, held semiannually in conjunction with IceCube Collaboration meetings

The 5th annual IceCube Masterclass was held in March 2018 and had over 300 participants at 20 locations, including 8 in the US. For Spring 2019, 19 institutions will participate, including 11 sites in the US. IceCube applicants to the UWRF REU programs mentioned that taking the IceCube Masterclass a few years earlier had been a major motivator for their continued astrophysics research. Both UWRF and UR provided IceCube-science–inspired summer enrichment courses for their respective Upward Bound programs. Upward Bound provides additional mentoring and skill-building activities for low-income/first-generation high school students to help prepare them for post-secondary school success. Former IceCube PolarTREC teacher Kate Miller published a video article “Sew What? Engineering Fashion in the Classroom” in the Spring 2018 issue of Kaleidoscope. 8

WIPAC high school interns working on components of an IceCube LED model.

APS and Dane County Arts Board funding was secured to support the LAB3: art & literature & physics project. Collaborative artwork inspired by physics was produced by six teams, each consisting of a scientist, a writer, an artist, and 3-4 high school students, who worked together for about four months, culminating in a public exhibit in Madison in September 2018. Currently, about two dozen Madison-area high school students are working on a new LED model of the IceCube Neutrino Observatory in a 10-week WIPAC internship program, which concludes at the end of February 2019.

Science teacher Lesley Anderson from High Tech High in Chula Vista, CA, produced a number of engaging videos, available in some of the journal posts on her PolarTREC page 9, showing what it is like to live and work at the South Pole based on her 2017-18 deployment with IceCube. Teacher Eric Muhs, who has worked with IceCube and its predecessor AMANDA on the UWRF Upward Bound program for 15 years, was a late replacement for 2018-19 IceCube PolarTREC educator Michelle Hall. Michelle elected to postpone her trip late in the summer, and Eric ultimately did not pass the physical qualification requirement. UWRF’s NSF astrophysics REU program selected six students for ten-week summer 2018 research experiences, including attending the IceCube software and science boot camp held at WIPAC. Multiple IceCube institutions also supported research opportunities for undergraduates. One student from the 2017 UWRF IRES program attended the IceCube Collaboration meeting in May 2018 in Atlanta and presented a poster on his research; five other posters from his fellow IRES colleagues were also displayed.

The public press conference at NSF on July 12, 2018, held in conjunction with the publication of two papers on neutrinos associated with the blazar TXS 0506+056, was an opportunity to celebrate achieving a major goal of the IceCube project: detecting the first point source of neutrinos. We promoted the press conference on social media (the top tweet had over 100,000 impressions) and supported the development of a collection of graphics and videos, produced both in-house and by partners from other major agencies (NASA, DESY, etc.) inside and outside the US. IceCube worked with colleagues at the Wisconsin Institutes for Discovery and Field Day Labs, who produced a virtual reality experience for the Oculus Rift system. With the headset, users are transported to the IceCube Laboratory at the South Pole and then follow the path of a neutrino back to its source, a black hole billions of light-years away. The game, which takes about five minutes to play, has been a big hit at several venues, including the City of Science event, part of the World Science Festival in New York City, where thousands visited the IceCube booth.

IceCube continues to receive multiple requests for talks per week and works hard to provide speakers for all opportunities. IceCube collaborators had a large presence at the Polar 2018 joint meeting of the Scientific Committee on Antarctic Research and the International Arctic Science Committee held in Davos, Switzerland. IceCube had more than a dozen presentations (talks and posters) in total covering science, communication, and outreach and has collaboration members playing leadership roles in the SCAR Astronomy and Astrophysics from Antarctica Scientific Research Program.

The IceCube communication office manages press and other communication activities for both the neutrino observatory and the IceCube Collaboration. For the IceCube comic series “Rosie & Gibbs,” we published a fifth issue, which explained the role of winterovers at the South Pole. We continue to produce multimedia content for social networks, which has increased the reach of IceCube communication from a few thousand to tens of thousands in an average week, with peaks of hundreds of thousands associated with big announcements.

In 2015, IceCube launched a professional development program with a strong focus on communication and diversity. Twice per year, during the spring and fall collaboration meetings, IceCubers can participate in a communication training session and/or a workshop to discuss or share best practices for increasing diversity and inclusion in IceCube and related fields. These events are attended by 25-30 people on average and are highly valued by the target communities: early-career researchers, women, and allies. To sustain and expand diversity and inclusion efforts, an IceCube-led Multimessenger Diversity Network (MDN) is being developed with funds from a supplemental award to the IceCube maintenance and operations grant. A diversity coordinator for the MDN has been hired; she has also been selected for the AAAS 2019 Community Engagement Fellows program and attended training in January 2019.

The MDN will build on the initiatives of the IceCube Neutrino Observatory and its partners in multimessenger astronomy to further develop talent for STEM research, increase diversity and inclusion in science and related fields, and connect research-based skills and technology to societal challenges. The activities will be twofold: 1) implement collaboration-wide efforts to help IceCube advance as an inclusive and open community that excels in research, fosters STEM careers in academia and industry, and engages local communities, and 2) establish alliances with other multimessenger astronomy collaborations to identify common goals and develop shared strategies to broaden participation in STEM. We plan to become a member of the main INCLUDES National Network as Multimessenger@INCLUDES. The diversity fellows from IceCube, LSST, LIGO, and VERITAS have met virtually and will meet face-to-face in Madison in March 2019.

Section III – Project Governance and Upcoming Events

The detailed M&O institutional responsibilities and Ph.D. author head count are revised twice a year at the time of the IceCube Collaboration meetings. Each revision is formally approved as part of the institutional Memorandum of Understanding (MoU) documentation. The MoU was last revised in September 2018 for the Fall collaboration meeting in Stockholm, Sweden (v25.0), and the next revision (v26.0) will be posted in May 2019 at the Spring collaboration meeting in Madison, WI.

IceCube Collaborating Institutions

Following the September 2018 Fall collaboration meeting, Mercer University, with Dr. Frank McNally as the institutional lead, and Karlsruhe Institute of Technology (KIT), with Dr. Ralph Engel as the institutional lead, joined the IceCube Collaboration.

As of February 2019, the IceCube Collaboration consists of 50 institutions in 12 countries (26 U.S. and Canada, 20 Europe and 4 Asia Pacific).

The list of current IceCube collaborating institutions can be found at:

http://icecube.wisc.edu/collaboration/collaborators

IceCube Major Meetings and Events

IceCube Spring Collaboration Meeting – Atlanta, GA May 8-12, 2018

Software and Computing Advisory Panel Meeting – Madison, WI June 4-5, 2018

IceCube Fall Collaboration Meeting – Stockholm, Sweden September 24-28, 2018

International Oversight and Finance Group – Stockholm, Sweden September 28, 2018

ICNO M&O Mid-Term Review March 11, 2019

Acronym List

CPU Central Processing Unit

CVMFS CernVM-Filesystem

DAQ Data Acquisition System

DOM Digital Optical Module

E&O Education and Outreach

GPU Graphics Processing Unit

I3Moni IceCube Run Monitoring system

IC86 The 86-string IceCube Array completed Dec 2010

IceACT IceCube Air Cherenkov Telescope

IceCube Live The system that integrates control of all of the detector’s critical subsystems; also “I3Live”

IceTray IceCube core analysis software framework, part of the IceCube core software library

MoU Memorandum of Understanding between UW–Madison and all collaborating institutions

PMT Photomultiplier Tube

PnF Processing and Filtering

PQ Physical Qualification

SNDAQ Supernova Data Acquisition System

SPE Single photoelectron

SPS South Pole System

SuperDST/sDST Super Data Storage and Transfer, a highly compressed IceCube data format

TDRSS Tracking and Data Relay Satellite System, a network of communications satellites

TFT Board Trigger Filter and Transmit Board

WIPAC Wisconsin IceCube Particle Astrophysics Center
