Foreword

Section I – Financial/Administrative Performance

Section II – Maintenance and Operations Status and Performance

Detector Operations and Maintenance

Computing and Data Management

Data Release

Program Management

Section III – Project Governance and Upcoming Events

Section I – Financial/Administrative Performance

The University of Wisconsin–Madison is maintaining three separate accounts with supporting charge numbers for collecting IceCube M&O funding and reporting related costs: 1) NSF M&O Core account, 2) U.S. Common Fund account, and 3) Non-U.S. Common Fund account.

A total amount of $6,900,000 was released to UW–Madison to cover the costs of maintenance and operations in FY2013: $914,550 was directed to the U.S. Common Fund account and the remaining $5,985,450 was directed to the IceCube M&O Core account. An additional $193,749 of FY2013 funding was awarded to support an IceCube M&O supplemental proposal for cyberinfrastructure (Figure 1).

| FY2013 | Funds Awarded to UW |
| --- | --- |
| IceCube M&O Core account | $5,985,450 + 193,749 |
| U.S. Common Fund account | $914,550 |
| TOTAL NSF Funds | $7,093,749 |

| Institution | Major Responsibilities | Funds |
| --- | --- | --- |
| Lawrence Berkeley National Laboratory | | $76,631 |
| Pennsylvania State University | | $37,029 |
| University of California at Berkeley | | $137,300 |
| University of Delaware, Bartol Institute | | $224,541 |
| University of Maryland at College Park | | $578,030 |
| University of Alabama at Tuscaloosa | | $50,000 |
| Georgia Institute of Technology | | $57,791 |
| Total | | $1,161,322 |

IceCube NSF M&O Award Budget, Actual Cost and Forecast

The current IceCube NSF M&O 5-year award was established on October 1, 2010, at the beginning of Federal Fiscal Year 2011. A total amount of $20,894K was awarded to the University of Wisconsin (UW) for supporting FY2011-2013.

At the end of August 2013, eleven months into FY2013, the total actual cost to date was $18,473K. Open commitments of $1,598K were encumbered to cover September 2013 salaries and fringe, purchase orders placed for computing hardware and software, and FY2013 lagging invoices from subawardee institutions. With a projection of $136K for the remaining expenses in September 2013, the estimated unspent funds at the end of FY2013 are $687K, which is 9.7% of the $7,094K awarded for FY2013.

Figure 3 summarizes the current financial status on August 31, 2013, and the estimated balance at the end of FY2013.

| (a) | (b) | (c) | (d) = a - b - c | (e) | (f) = d - e |
| --- | --- | --- | --- | --- | --- |
| YEARS 1-3 Budget Oct.’10-Sep.’13 | Actual Cost To Date through Aug. 31, 2013 | Open Commitments on Aug. 31, 2013 | Current Balance on Aug. 31, 2013 | Remaining Projected Expenses in Sept. 2013 | End of FY2013 Forecast Balance on Sept. 30, 2013 |
| $20,894K | $18,473K | $1,598K | $823K | $136K | $687K |
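The balance columns in Figure 3 follow directly from the column formulas (d) = a - b - c and (f) = d - e. The short sketch below reproduces that arithmetic from the figures quoted in this section. The variable names are illustrative only and do not come from any IceCube accounting system.

```python
# All amounts are in thousands of dollars ($K), as quoted in this section.
budget_years_1_3 = 20_894      # (a) Years 1-3 budget
actual_cost_to_date = 18_473   # (b) actual cost through Aug. 31, 2013
open_commitments = 1_598       # (c) open commitments on Aug. 31, 2013
remaining_projected = 136      # (e) projected expenses for Sept. 2013
fy2013_awarded = 7_094         # FY2013 awarded funds

# (d) = a - b - c
current_balance = budget_years_1_3 - actual_cost_to_date - open_commitments
# (f) = d - e
forecast_balance = current_balance - remaining_projected
# Share of the FY2013 award expected to remain unspent
unspent_fraction = forecast_balance / fy2013_awarded

print(f"(d) current balance:  ${current_balance:,}K")
print(f"(f) forecast balance: ${forecast_balance:,}K")
print(f"unspent share of FY2013 award: {unspent_fraction:.1%}")
```

Running the sketch gives a current balance of $823K, a forecast balance of $687K, and an unspent share of 9.7%, in agreement with the values reported above.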

IceCube M&O Common Fund Contributions

The IceCube M&O Common Fund was established to enable collaborating institutions to contribute to the costs of maintaining the computing hardware and software required to manage experimental data prior to processing for analysis.

Each institution contributed to the Common Fund, based on the total number of the institution’s Ph.D. authors, at the established rate of $13,650 per Ph.D. author. The Collaboration updates the Ph.D. author count twice a year before each collaboration meeting in conjunction with the update to the IceCube Memorandum of Understanding for M&O.

The M&O activities identified as appropriate for support from the Common Fund are those core activities that are agreed to be of common necessity for reliable operation of the IceCube detector and computing infrastructure and are listed in the Maintenance & Operations Plan.

Figure 4 summarizes the planned and actual Common Fund contributions for the period of April 1, 2012–March 31, 2013, based on v12.0 of the IceCube Institutional Memorandum of Understanding, from March 2012.

| | Ph.D. Authors | Planned Contribution | Actual Received |
| --- | --- | --- | --- |
| Total Common Funds | 124 | $1,692,600 | $1,690,053 |
| U.S. Contribution | 67 | $914,550 | $914,550 |
| Non-U.S. Contribution | 57 | $778,050 | $775,573 |
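The planned contributions in Figure 4 are the Ph.D. author counts multiplied by the established rate of $13,650 per author. The following sketch illustrates that calculation for the planned amounts only. The function and dictionary names are hypothetical and chosen for illustration.

```python
RATE_PER_AUTHOR = 13_650  # dollars per Ph.D. author, as stated in the text


def planned_contribution(phd_authors: int) -> int:
    """Planned Common Fund contribution for a given Ph.D. author count."""
    return phd_authors * RATE_PER_AUTHOR


# Author counts from Figure 4 (MoU v12.0, March 2012)
authors = {"U.S.": 67, "Non-U.S.": 57}

planned = {group: planned_contribution(n) for group, n in authors.items()}
total_authors = sum(authors.values())
total_planned = sum(planned.values())

for group, amount in planned.items():
    print(f"{group}: ${amount:,}")
print(f"Total ({total_authors} authors): ${total_planned:,}")
```

The results match the Planned Contribution column: $914,550 for U.S. institutions, $778,050 for non-U.S. institutions, and $1,692,600 in total for 124 authors.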

Section II – Maintenance and Operations Status and Performance

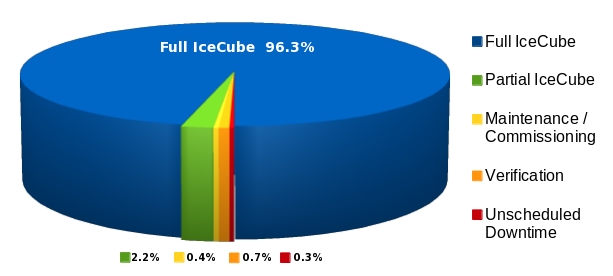

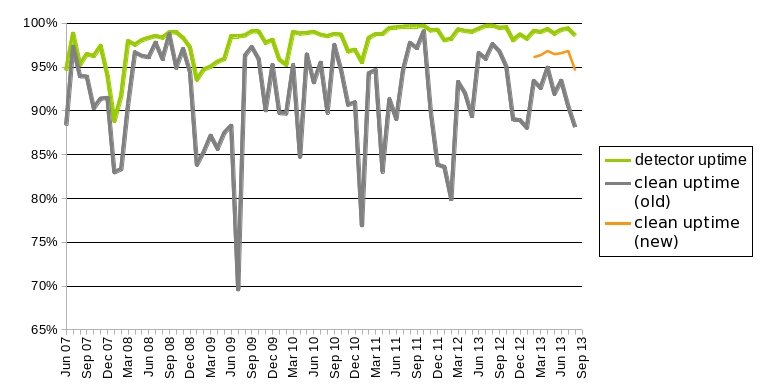

Detector Operations and Maintenance

The IceCube Data Acquisition System (DAQ) has reached a stable state, and consequently the frequency of software releases has slowed to the rate of 1–2 per year. Nevertheless, the DAQ group continues to develop new features and patch bugs. During the reporting period of October 2012–September 2013, the following accomplishments are noted:

· Activation of the hit spooling feature of DAQ, whereby the raw, subtrigger data streams from all DOMs are temporarily stored on disk for intervals of up to several hours. This feature is described in more detail later in this section.

· Delivery of new DOM mainboard release (445) to correct pedestal jitter and improve rejection of light contamination during the pedestal calibration sequence at the beginning of each run. Additionally, this release of the mainboard software and firmware includes support for streamlined messaging with the DOMHub that improves the data bandwidth slightly.

· Delivery of a new release (“Capital”) of DAQ, which includes support for immediate run switching in the typical circumstances in which run configuration does not change. This feature (“stopless runs”) is not activated yet, as we are finalizing end-to-end verification on the northern test system.

· Development of a new release (“Furthermore”) of DAQ, which includes performance enhancements in the IceCube trigger system by spreading the multiple trigger algorithms across many execution threads, thus benefiting from the multicore architectures used in the DAQ computing platforms. Initial tests of the Furthermore release candidates were executed on June 1, 2013. However, these candidates did not pass the verification stage due to a subtle difference in formation of the event readout windows. This bug has been addressed, and the DAQ group is preparing another release candidate.

The single-board computer (SBC) in the IceCube DOMHubs was also previously identified as a bottleneck in DAQ processing. Ten DOMHubs at SPS were upgraded during the 2012-13 polar season with new Advantech D525 SBCs. Initial performance of the new hubs is excellent, and we plan to upgrade the remaining hubs during the 2013-14 season. Additionally, new hard disk drives will be installed in all upgraded hubs, increasing the buffer space for HitSpooling (see below). We continue research and development efforts on a new design of the hub architecture for increased rack density, reduced power consumption, and ease of maintenance.

HitSpooling, a DAQ feature under development since 2011, extends the data collection buffers of the IceCube DOMHubs from O(10) seconds to about 3 hours. It uses the DOMHub readout computers’ hard disk drives to store the raw data stream from each DOM channel. These streams are written to a set of files organized as circular buffers at the rate of approximately 2 MB/sec per hub. Depending on the DOMHubs’ hard disk size, the buffer time can be as long as 16 hours.
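The relationship between disk space and buffer time can be illustrated with a short calculation. The sketch below is not the DAQ implementation: it assumes decimal megabytes, a constant write rate of 2 MB/sec per hub, and a hypothetical layout in which the circular buffer is a fixed number of files that each cover a fixed time slice. The function names and the slice parameters are assumptions made for illustration.

```python
MB = 10**6  # assumption: decimal megabytes; the report does not specify
WRITE_RATE = 2 * MB  # bytes per second per hub (approximate rate from the text)


def buffer_hours(disk_bytes: float, rate: float = WRITE_RATE) -> float:
    """Hours of raw data a hub can hold in a given amount of disk space."""
    return disk_bytes / rate / 3600


def disk_bytes_needed(hours: float, rate: float = WRITE_RATE) -> float:
    """Disk space needed on one hub to buffer the given number of hours."""
    return hours * 3600 * rate


def slot_for(timestamp_s: float, slot_seconds: int, n_slots: int) -> int:
    """Index of the circular-buffer file covering a given time.

    Hypothetical layout: n_slots files, each covering slot_seconds of data.
    Once the last file is full, writing wraps around to the first file.
    """
    return int(timestamp_s // slot_seconds) % n_slots


for hours in (3, 16):
    gigabytes = disk_bytes_needed(hours) / 10**9
    print(f"{hours} h of buffer needs about {gigabytes:.1f} GB per hub")
```

Under these assumptions, a 3-hour buffer needs about 21.6 GB per hub and a 16-hour buffer about 115.2 GB per hub, which shows why larger hard disk drives translate directly into longer buffer times.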

The primary client for these data is the IceCube supernova online trigger (SNDAQ). The SNDAQ collects scaler data to detect the neutrino burst of a Galactic core-collapse supernova. However, this trigger is formed several minutes after the DOM hit data have been collected, by which time the RAM buffers read out by the mainline DAQ trigger system have long been cleared to make room for new incoming data. With HitSpooling, the SNDAQ can now request data from the extended buffers and use these data to remove correlated noise from cosmic ray muons to improve trigger sensitivity, gain additional information on the supernova neutrino shockwave, and partially recover data from a very nearby supernova even in the case of a DAQ crash.

When the HitSpool-Interface receives a request, it collects the corresponding buffered data from all DOMHubs and stores them on a central machine at SPS, from where they are sent to the north via SPADE. The average data load is ~10 GB for a standard request of 90 seconds of data from all DOMHubs. From the central storage at SPS, the data are transferred via satellite and stored in the data warehouse, where an automated preprocessing service handles them further. The DAQ side of the HitSpooling architecture has been running since January 2013. The infrastructure to handle requests, the HitSpool-Interface, was activated on April 1, 2013. Since then it has been running stably and has already automatically processed several requests triggered from the SNDAQ client.

The SNDAQ found that 98.0% of the available data from September 2, 2012, through September 9, 2013, met the minimum analysis criteria for run duration and data quality. The trigger downtime was 1.7 days (0.5% of the time interval) from physics runs under 10 minutes in duration. Supernova candidates in these short runs can be recovered offline should the need arise.
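The quoted downtime percentage can be checked against the length of the reporting interval. The sketch below assumes the interval is counted from September 2, 2012, to September 9, 2013, without including the end date. The report does not state the counting convention, and the choice does not change the rounded result.

```python
from datetime import date

# Interval covered by the SNDAQ data-quality statement
start = date(2012, 9, 2)
end = date(2013, 9, 9)
interval_days = (end - start).days

# Trigger downtime from physics runs shorter than 10 minutes
downtime_days = 1.7
downtime_fraction = downtime_days / interval_days

print(f"interval: {interval_days} days")
print(f"downtime: {downtime_fraction:.1%} of the interval")
```

The interval is 372 days, so 1.7 days of downtime corresponds to roughly 0.5% of the interval, consistent with the figure above.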

A new SNDAQ version ("Morion") was installed on January 24, 2013, and additional fixes improving the communication between the SNDAQ and I3Live were installed one month later. The improvements include the real-time transmission of process latency to I3Live, a backup ring buffer to store 24 hours of raw data files for data checks and transmission of raw data in case of a serious alert, a redesigned logging mechanism, and the identification and removal of three sources of occasional SNDAQ crashes. No SNDAQ crashes have been recorded since the Morion release. The SNDAQ HitSpool-Interface is fully functional. So far, three events exceeding an adjustable threshold have been transmitted automatically. A new version of the SNDAQ (“Kabuto”), incorporating the full list of quantities requested to be monitored by I3Moni 2.0, as well as additional streamlining of the code, is scheduled to be installed by October 2013.

The dominant fraction of SNDAQ false alarms can be assigned to a positive fluctuation in the number of atmospheric muons that enter the detector during the time of the alert. The effect is more pronounced in the Antarctic summer due to the warmer and less dense atmosphere, leading to a larger rate of cosmic ray muons. These muons are identified in the Level 1 processing and manually analyzed offline on satellite-transmitted data, leading to a substantial delay. Code is currently being tested to automate this procedure and to produce corresponding monitoring histograms. A plan has been developed to subtract hits associated with triggered muons at the South Pole in real time. The plan is to provide a robust system within one year such that the SNEWS trigger threshold can be substantially reduced to increase the supernova detection efficiency and range. Furthermore, seasonal changes of the alarm rate would be avoided.

The online filtering system performs real-time reconstruction and selection of events collected by pDAQ and sends them for transmission north via the Data Movement system. Version V13-04-01 is currently deployed in support of the IC86-2013 physics run. Efforts for the past year included supporting the transition of online filters to the IC86-2013 physics run, test run and final configurations, releases V13-04-00, V13-04-00, and V13-04-01, respectively. Software system work continues on two efforts for the online filtering system: first, preparing software releases for the planned upgrade of the SPS computing system and, second, finishing development efforts in support of the I3Moni 2.0 effort, where data-quality monitoring values are now available for direct reporting to the I3Live system.

A weekly calibration call keeps collaborators abreast of issues in both in-ice and offline DOM calibration. An effort is underway to refine the gain calibration using muon data, which are collected continuously throughout the year. The gain calibration improvement uses individual fits to the single photoelectron (SPE) peak of each DOM; these fits were collected during summer 2013, and the waveform calibration software is being updated to use the fit results. Ongoing efforts are directed at improving our knowledge of the absolute sensitivity of the DOMs in the ice, using both muon data and laboratory measurements. Online verification software is being merged into the upcoming I3Moni 2.0 framework. Software modules have been written that output the SPE distributions and baselines in the I3Moni 2.0-compliant format. Flasher data continue to be analyzed to reduce ice model systematics, with a major focus on the hole ice, low brightness flasher data, and multiwavelength flashers.

A procedure for tracking IceTop snow depths between physical measurements was established last year and is working well. Snow depths are physically measured at the South Pole three times during each season: once soon after station opening, once after grooming, and once soon after station closing. The plan for snow management is reviewed with South Pole station management each season. We are working to integrate IceTop and related environmental monitoring functions into IceCube Live.

IC86 Physics Runs – The third season of the 86-string physics run, IC86-2013, began on May 2, 2013. Online improvements include a mild reduction of the filtered rate and an improved HitSpooling system. Preparations for the IC86-2014 physics run are starting, with updated filter settings, DOM calibrations, and ongoing HitSpooling support. DAQ trigger settings are not changing from IC86-2013. Two DOMs (0.04%) failed during IC86-2012, and six were retested during the pole season and returned to data-taking for IC86-2013. Thus the total number of active DOMs at the end of the IC86-2012 run (May 2013) has increased by 4 to a total of 5404.

TFT Board – The TFT board is in charge of adjudicating SPS resources according to scientific need, as well as assigning CPU and storage resources at UW for mass offline data processing (a.k.a. Level 2). The IC86-2013 season was the first instance in which L2 processing was handled by the TFT from beginning to end. The result is a dramatic reduction in the latency for processing of L2. For the IC86-2011 season, L2 latency was several months after the end of the data season. For IC86-2013, the latency is 2–4 weeks after data-taking.

Working groups within IceCube will submit approximately 20 proposals requesting data processing, satellite bandwidth and data storage, and the use of various IceCube triggers for IC86-2014. Sophisticated online filtering data selection techniques are used on SPS to preserve bandwidth for other science objectives. Over the past three years, new data compression algorithms (SuperDST) have allowed IceCube to send a larger fraction of the triggered events over TDRSS than in previous seasons. The additional data enhances the science of IceCube in the study of cosmic ray anisotropy and the search for neutrino sources toward the Galactic Center.

The average TDRSS data transfer rate during IC86-2012 was 100 GB/day plus an additional 5 GB/day for use by the detector operations group. IceCube is a heavy user of the available bandwidth, and we will continue to moderate our usage without compromising the physics data. During austral summer 2012-13, the bandwidth usage by IceCube exceeded its allocation by about 5 GB/day (about a 5% overuse). The data rate in IceCube is intrinsically variable with the seasons because the muon rate underground depends on the upper atmospheric temperature. The summer excess bandwidth usage was caused by an inaccurate estimate of the seasonal effects in the new SuperDST data format. NSF temporarily increased IceCube’s allocation to 110 GB/day. Filter usage in IC86-2013 has been adjusted accordingly so that IceCube remains within its bandwidth allocation of 105 GB/day. However, minor uncertainties remain, as IceCube data rates are sensitive to weather.
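The bandwidth figures in this paragraph can be related with a small calculation. The sketch below uses only the numbers quoted above. The helper function name is hypothetical, and the summer usage is taken to be the allocation plus the quoted excess.

```python
# Values in GB/day, as quoted in the text
physics_rate = 100        # average TDRSS transfer rate during IC86-2012
detector_ops_rate = 5     # additional use by the detector operations group
allocation = 105          # IceCube bandwidth allocation
temporary_allocation = 110
overuse = 5               # approximate summer 2012-13 excess


def within_allocation(usage: float, limit: float) -> bool:
    """True if the daily usage fits within the given allocation."""
    return usage <= limit


summer_usage = allocation + overuse
overuse_fraction = overuse / allocation

print(f"nominal usage: {physics_rate + detector_ops_rate} GB/day")
print(f"summer usage:  {summer_usage} GB/day ({overuse_fraction:.1%} over)")
print("fits standard allocation: ", within_allocation(summer_usage, allocation))
print("fits temporary allocation:", within_allocation(summer_usage, temporary_allocation))
```

An excess of 5 GB/day on a 105 GB/day allocation is about 4.8%, which the text rounds to 5%. The resulting usage of roughly 110 GB/day fits within the temporarily increased allocation.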

SuperDARN – The SuperDARN radar at the South Pole went online in January 2013. SuperDARN coordinated its initial activities with IceCube personnel on station at the time and is providing time-stamped logs of its radio transmissions. Several analyses have searched for any radio-frequency interference in IceCube, including in the potentially sensitive analog time-calibration system (RAPCal); no adverse effects have been observed.

Personnel – The detector operations manager, Denise Laitsch, retired in October 2012; this position was filled by the deputy operations manager, John Kelley. The IceCube Run Coordinator duties transitioned from Sebastian Böser to postdoctoral researcher Matt Kauer in May 2013.

Computing and Data Management

Computing Infrastructure – IceCube computing and storage systems, both at the Pole and in the north, have performed well over the past year. Expansions of 120 TB (terabytes) in the storage space for analysis data and of 30 TB in the user space were completed in May 2013. Additional 30-TB expansions for each of these two storage areas are currently in progress. The total disk storage capacity in the data warehouse is 2.7 PB (petabytes): 1.1 PB for experimental data, 1.3 PB for simulation, 270 TB for analysis, and 119 TB for user data. The procurement of a new disk expansion is ongoing, with the goal of increasing the overall warehouse capacity by at least 300 TB. The plan is to have this extra space online before the end of 2013.

In October 2012, the IceCube Collaboration approved the use of direct photon propagation for the mass production of simulation data. The direct propagation method requires GPU (graphics processing unit) hardware to deliver adequate performance. A GPU-based cluster, named GZK-9000, was installed in early 2012, and it has been extensively used for simulation production since then. The GZK-9000 cluster contains 48 NVIDIA Tesla M2070 GPUs. It is estimated that 300 of these GPUs will be needed to enable the production of one year of detector live-time simulation in a timely manner. A new GPU cluster containing 32 NVIDIA GeForce GTX-690 and 32 AMD ATI Radeon 7970 GPUs was purchased and is currently being deployed at UW–Madison. The estimated power of this new facility is equivalent to four times that of the existing GZK-9000 cluster. A number of other sites in the collaboration are planning to deploy GPU clusters in the coming months to reach the overall simulation production capacity goal.
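The gap between the installed GPU capacity and the stated goal can be estimated with simple arithmetic. The sketch below rests on two assumptions that the report does not state explicitly: that the goal of 300 GPUs refers to Tesla M2070-class devices, and that capacity adds linearly, so the new cluster counts as four times the 48 GPUs in GZK-9000. The variable names are illustrative.

```python
# Capacity is expressed in "M2070-equivalent" GPUs (an assumed unit)
gzk9000_gpus = 48          # Tesla M2070 GPUs in the GZK-9000 cluster
target_gpus = 300          # estimated need for one year of live-time simulation
new_cluster_factor = 4     # new cluster is about 4x the GZK-9000 cluster

new_cluster_equiv = new_cluster_factor * gzk9000_gpus
uw_total_equiv = gzk9000_gpus + new_cluster_equiv
remaining_equiv = target_gpus - uw_total_equiv

print(f"new cluster:       {new_cluster_equiv} M2070-equivalent GPUs")
print(f"UW-Madison total:  {uw_total_equiv} M2070-equivalent GPUs")
print(f"still needed:      {remaining_equiv} ({remaining_equiv / target_gpus:.0%} of goal)")
```

Under these assumptions, the two UW–Madison clusters together provide the equivalent of 240 GPUs, leaving about 60 GPU-equivalents (20% of the goal) to be supplied by other sites in the collaboration.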

A complete upgrade of the SPS servers is planned for the 2013-14 austral summer, since the current servers are in their third season of use and at the end of their planned lifecycle. This server upgrade will also address some performance issues that have been detected in parts of the system when operating under high trigger rate conditions. The new servers were received in June and have undergone extensive testing and burn-in, as execute nodes of the cluster, for more than two months. The SPTS servers have been replaced and made available to the detector operations team for testing the online applications on the new SL6.3 operating system. Besides the server replacement, a prototype system that will archive data to disk instead of tape will be deployed in the 2013-14 season. This system will operate in parallel with the existing tape-based system and will make use of the new JADE software, which notably improves the scalability and configurability of the current SPADE software. This prototype will provide direct experience with the handling of disks and the procedures necessary to operate such a system. If it is successful, the tape-based system will be retired in the 2014-15 season. Finally, we also plan to replace the remaining HP UPS systems during the 2013-14 season.

At the end of 2012, the IceCube Collaboration agreed to use the compressed SuperDST as the new long-term archival format for IceCube data. This will significantly reduce the capacity requirements for the archive while keeping all the relevant physics information in the data at the same time. The decision taken was that this change will be implemented from the IC86-2011 run onwards. This means that the RAW data for IC86-2011, IC86-2012, and part of IC86-2013 will need to be read back from tape to generate a complete set of SuperDST. The setup for this operation is currently being finalized and has been running in the background at the UW–Madison facilities. About 16% of IC86-2012 has been processed as of mid-September 2013.

A series of actions are planned with the goal of facilitating access to and use of Grid resources by the collaboration members. With this objective, a CernVM-Filesystem (CVMFS) repository has been deployed at UW–Madison hosting the IceCube offline software stack and photonic tables needed for data reconstruction and analysis. CVMFS enables seamless access to the IceCube software from Grid nodes by means of HTTP. High scalability and performance can be accomplished by deploying a chain of standard web caches. The system is currently being exercised with simulation production, and the plan is to extend it to analysis users and make it available in as many sites as possible, both within and outside the United States.

Data Movement – Data movement has performed nominally over the past year. Figure 7 shows the daily satellite transfer rates in GB/day through August 2013. The IC86 filtered physics data are responsible for 96% of the bandwidth usage.

Figure 7: Daily satellite transfer rates (GB/day) through August 2013; the IC86-2013 run start is marked.

Data Release

IceCube Open Data: http://icecube.umd.edu/PublicData/I3OpenDataFormat.html

IceCube Policy on Data Sharing: http://icecube.umd.edu/PublicData/policy/IceCubeDataPolicy.pdf

AMANDA 7 Year Data: http://icecube.wisc.edu/science/data/amanda

IceCube String 22–Solar WIMP Data: http://icecube.wisc.edu/science/data/ic22-solar-wimp

IceCube String 40 Data: http://icecube.wisc.edu/science/data/ic40

Program Management

· The FY2013 M&O Midyear Report was submitted in March 2013.

· The detailed M&O Memorandum of Understanding (MoU) addressing responsibilities of each collaborating institution is currently being revised for the collaboration meeting in Munich, Germany, October 8–12, 2013.

· Computing resources are managed to maximize uptime of all computing services and to ensure the availability of required distributed services, including storage, processing, database, grid, networking, interactive user access, user support, and quota management.

· NSF provided funding of $6,900,000 to UW–Madison to support M&O activities in FY2013. An additional $193,749 of FY2013 funding was awarded to support an IceCube M&O supplemental proposal for cyberinfrastructure: software development required to modify the IceCube data acquisition, processing, and transmission software to create data pipelines, making near real-time neutrino events available to other facilities (financial details in Section I).

IceCube M&O - FY2013 Milestones Status

· Provide NSF and IOFG with the most recent Memorandum of Understanding (MoU) with all U.S. and foreign institutions in the collaboration. MoU v13.0

· Annual South Pole System hardware and software upgrade.

· Submit a midyear report with a summary of the status and performance of overall M&O activities, including data handling and detector systems.

· Revise the institutional Memorandum of Understanding Statement of Work and PhD Authors Head Count for the spring collaboration meeting. MoU v14.0

· Report on scientific results at the spring collaboration meeting.

· Provide NSF and IOFG with the most recent Memorandum of Understanding (MoU) with all U.S. and foreign institutions in the collaboration. MoU v14.0

· Midterm NSF IceCube M&O Review.

· Submit an annual report which describes progress made and work accomplished based on M&O objectives and milestones.

· Revise the institutional Memorandum of Understanding Statement of Work and PhD Authors Head Count for the fall collaboration meeting. MoU v15.0

· Report on scientific results at the fall collaboration meeting.

· Annual detector uptime self assessment.

Engineering, Science & Technical Support – Ongoing support for the IceCube detector continues with the maintenance and operation of the South Pole Systems, the South Pole Test System, and the Cable Test System. The latter two systems are located at the University of Wisconsin–Madison and enable the development of new detector functionality as well as investigations into various operational issues, such as communication disruptions and electromagnetic interference. Technical support provides for coordination, communication, and assessment of impacts of activities carried out by external groups engaged in experiments or potential experiments at the South Pole. The IceCube detector performance continues to improve as we restore individual DOMs to the array at a faster rate than problem DOMs are removed during normal operations.

Software Coordination – A review panel for permanent code was assembled for the IceTray-based software projects and to address the long-term operational implications of recommendations from the Internal Detector Subsystem Reviews of the online software systems. The permanent code reviewers are working to unify the coding standards and apply these standards in a thorough and timely manner. The internal reviews of the online systems mark an important transition from a development mode into steady-state maintenance and operations. The reviews highlight the many areas of success as well as identify areas in need of additional coordination and improvement.

Work continues on the core analysis and simulation software to rewrite certain legacy projects and improve documentation and unit test coverage. Recent IceTray improvements have focused on increasing portability, reducing the number of external requirements to use the software, and easing version requirements of those external packages. A new release of the core simulation software, IceSim 4, is in the final testing/release candidate phase. A new event viewer was released for use in the collaboration in spring 2013.

Education & Outreach (E&O) – IceCube collaborators continue to engage national and international audiences in a multitude of venues and activities. We provide noteworthy examples below followed by exciting aspects of the growing IceCube E&O program made possible with the development of new activities and partnerships.

Spring 2013 was very busy, beginning with the inaugural meeting of the E&O advisory panel, followed by the IceCube Science Advisory Committee meeting, the IceCube Collaboration meeting, a WIPAC-hosted IceCube Particle Astrophysics meeting, and finally the NSF Maintenance and Operations midyear grant review—all in Madison. IceCube in general and the E&O efforts in particular were very positively reviewed by the Science Advisory Committee and the NSF review panel. At the IceCube Collaboration meeting, Education and Outreach was covered in a plenary talk, in a dedicated parallel session, and in a general-audience evening event that drew a crowd of several hundred.

There are many ongoing activities that engage both formal and informal audiences, ranging from local to international in scope. Provided below are summaries of three select projects that will contribute significantly to our goal of involving a larger fraction of the collaboration in E&O efforts.

· First, meetings have been held with potential partners to develop activities involving IceCube science for a Master Class for high school students. Plans are to give a presentation at the Fall collaboration meeting in Munich in October 2013, with the goal of offering the Master Class to students in the spring of 2014.

· Second, planning and preparations are underway for a high-profile panel discussion: Transdisciplinary Collaboration in ArtScience—Exploring New Worlds, Realizing the Imagined. The event will take place at the Deutsches Museum in Munich during the Fall IceCube Collaboration meeting.

· Third, the IceCube fulldome video show is progressing with our partners at the Milwaukee Public Museum. A meeting was held in July with the Milwaukee Public Museum marketing group to discuss plans for publicizing the show. The premiere is tentatively scheduled for November 21, 2013. In addition, the show will be marketed to IceCube collaborators to encourage them to work with their local or regional planetarium to host the show. We are particularly excited about the potential the fulldome video has to bring the collaboration together and generate significant audiences in Wisconsin and beyond.

An NSF IRES proposal was submitted to send 18 U.S. IceCube undergraduate students to European IceCube collaborating institutions for 10-week summer research experiences. If funded, we would support four students in the summer of 2014, six in 2015, and eight in 2016.

Section III – Project Governance and Upcoming Events

The detailed M&O institutional responsibilities and Ph.D. author head count are revised twice a year at the time of the IceCube Collaboration meetings. The revisions are formally approved as part of the institutional Memorandum of Understanding (MoU) documentation. The MoU was last revised in May 2013 for the Spring collaboration meeting in Madison, WI (v14.0), and the next revision (v15.0) will be posted in October 2013 at the Fall collaboration meeting in Munich, Germany.

IceCube Collaborating Institutions

Following the May 2013 Spring collaboration meeting, Sungkyunkwan University (SKKU) joined the IceCube Collaboration; the institutional lead is Dr. Carsten Rott.

As of September 2013, the IceCube Collaboration consists of 39 institutions in 11 countries (16 U.S. and 23 Europe and Asia Pacific).

The list of current IceCube collaborating institutions can be found at: http://icecube.wisc.edu/collaboration/collaborators

IceCube Major Meetings and Events

Cosmic Ray Anisotropy Workshop – Madison, WI, September 26–28, 2013

IceCube Fall Collaboration Meeting – Munich, Germany, October 8–12, 2013

Exploring New Worlds, Realizing the Imagined (outreach event) – Deutsches Museum, Munich, Germany, October 9, 2013

Acronym List

CVMFS CernVM-Filesystem

DAQ Data Acquisition System

DOM Digital Optical Module

GRL Good Run List

IceCube Live The system that integrates control of all of the detector’s critical subsystems; also “I3Live”

IceTray IceCube core analysis software framework, part of the IceCube core software library

MoU Memorandum of Understanding between UW–Madison and all collaborating institutions

pDAQ IceCube’s Data Acquisition System

SBC Single-board computer

SNDAQ Supernova Data Acquisition System

SPE Single photoelectron

SPS South Pole System

SPTS South Pole Test System at UW–Madison

SuperDST Super Data Storage and Transfer, a highly compressed IceCube data format

TDRSS Tracking and Data Relay Satellite System, a network of communications satellites

TFT Board Trigger Filter and Transmit Board

UPS Uninterruptible Power Supply

WIPAC Wisconsin IceCube Particle Astrophysics Center
